This week's routine was cardio training and weighted muscle training. I pushed hard, and the progress showed clearly.

As for work, or to be specific, LLMs and other ML tools and models, here are the details:

- stable diffusion models:

- nexblend: lucid,vivian,aurora,and iris models.

- popular tags: loli,petie,chibi,child,lolita fashion, topless, imminent rape,streaming tears

- ChatGPT 5.5 thinking models: for daily fact-checking and generating opinion articles

- grok 4.20 expert model: for substance of the chatgpt models, especially for nsfw content or illicit content.

- Gemini 3.1 Pro model: for health-related tasks, personal goal achievement, fitness training, and nutrition guidance.

- Claude Opus 5.7 & Sonnet 4.6 models: no use case in week 18, which was an inactive week for problem solving.

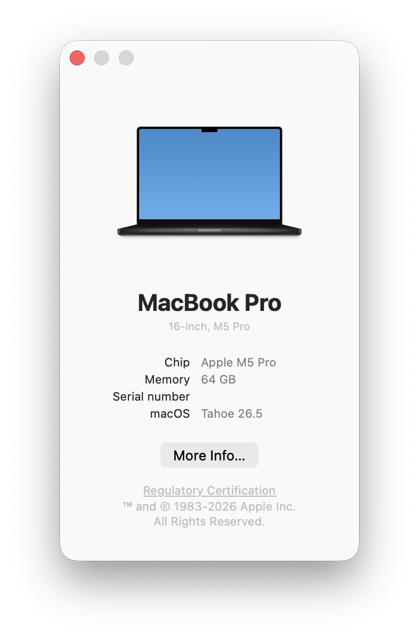

As for hardware upgrades, this was the first week with the new M5 Pro chip and its 64GB of unified memory. Below is a screenshot of my ComfyUI interface after setup: